The bottleneck in AI-assisted software development is changing. A single prompt is no longer the main constraint. Once teams use more than one coding assistant, the hard questions become operational: Which specification is current? Which agent owns which task? Which changes have been verified, and which are only generated output?

That pressure creates a new layer in the stack: AI orchestration.

The bottleneck moves from writing to control

GitHub Copilot, Cursor, Aider and Claude already show how capable individual coding assistants have become. They can suggest code, edit files, run commands, write tests and sometimes complete entire task packages.

The real problem starts one layer above that. When multiple agents, branches, reviews and sessions run in parallel, a good chat interface is not enough. Teams need to know who is working on what, where the implementation is being checked against the specification, and which changes are actually safe to merge.

What an orchestration layer does

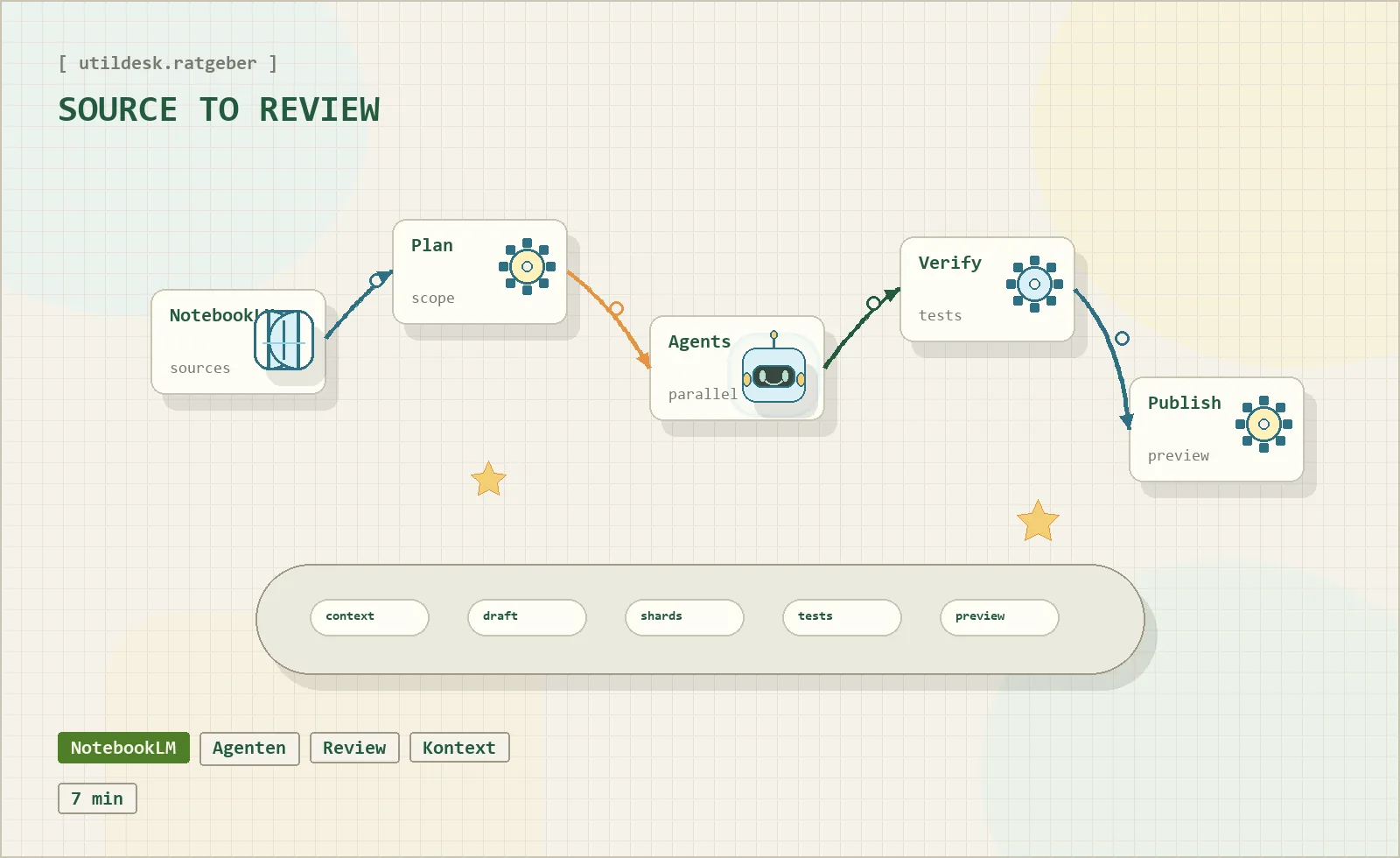

An orchestration layer does not replace developers. It structures the work of several agents around a shared goal. In practice, that means four building blocks:

- a living specification that humans and agents can both reference,

- a planning step that breaks work into useful slices,

- isolated work contexts so parallel changes do not overwrite each other,

- a verification and review loop before generated code becomes a pull request.

This is why Git becomes strategically important again. Worktrees and isolated branches are not glamorous, but they are essential when several agents touch the same repository. Without isolation, agentic development quickly turns into one large, unreviewable diff.

Frameworks such as LangChain and CrewAI point in the same direction. They are not just "another chatbot". They try to coordinate agents, state, tools and guardrails into a reliable operating model.

Verification matters more than another assistant

The more AI-generated changes appear at the same time, the less useful a purely manual review becomes. A diff can look clean and still miss the actual goal. It can pass isolated tests and still violate an architectural constraint.

That is why newer orchestration ideas focus on verification before the pull request. The question becomes: Does this implementation satisfy the living specification? Are the assumptions visible? Did the agent prove the change in the right environment?

Good orchestration is not measured by how much work it automates. It is measured by how well it reduces review load without hiding responsibility.

Visibility becomes a product feature

With more agents, teams need visibility into sessions, decisions and intermediate states. Which run is still open? Which decision was made in which session? Where can a developer resume a long-running task without rebuilding the full context?

This is why session management, resumability and audit trails become part of the product surface. Agentic productivity is not only generation speed. It depends on how well a team can control context over longer work runs.

Relevant tools on Utildesk

If you want to compare the topic through real products, start with these entries:

- Claude for long-context agentic coding sessions,

- GitHub Copilot for editor-native assistance,

- Cursor for an agentic IDE workflow,

- Aider for Git-oriented terminal sessions,

- LangChain for orchestration patterns,

- CrewAI for multi-agent collaboration.

Bottom line

AI orchestration is the layer that turns isolated assistant output into manageable engineering work. It makes context, ownership, verification and review explicit. Without that layer, teams may generate faster than they can understand. With it, agentic development has a path toward production-grade workflows.