Classic SEO tools often tell you how humans and search engines reach a website. They are less useful when the question is: Which AI systems are consuming server capacity, extracting content or using the site as background material for generated answers?

That question is becoming operational. Website owners need to understand, identify and control AI traffic in real time.

Relevant tools on Utildesk

If you want to connect this topic to practical tooling, start with these entries:

- Claude for long-context AI work and agentic coding sessions,

- GitHub Copilot as the editor-native assistant layer,

- Cursor for AI-first development workflows,

- Aider for Git-based terminal work,

- LangChain for agent orchestration patterns,

- CrewAI for collaborative agent flows.

Analyze AI traffic before you block it

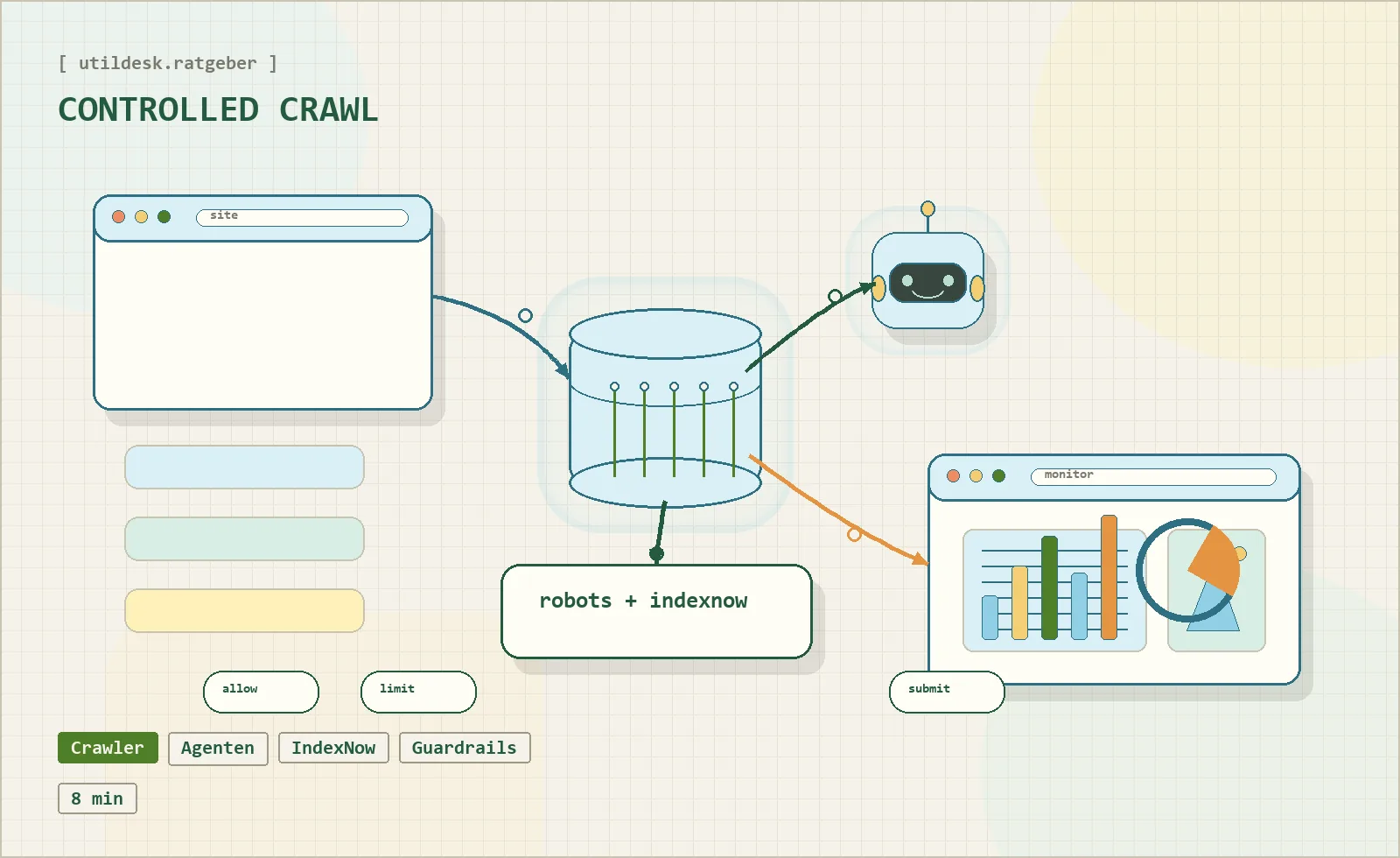

The first step toward an agent-ready website is visibility. You need to know which visitors are people, which are useful search bots and which are aggressive AI crawlers.

Modern crawl-control tools help operators see which AI services request which directories, how often they return and how much load they create. That visibility matters for publishers, ecommerce platforms and documentation sites, because not every crawl has the same value.

Some crawlers may help visibility. Others may consume resources without providing meaningful traffic or attribution. Without traffic-level context, any policy is guesswork.

Use update signals instead of waiting for crawlers

Traditional crawling is inefficient. Bots repeatedly check pages because they do not know when something changed. For AI search and answer engines, that can mean stale information or unnecessary load.

Crawler hints and protocols such as IndexNow move the site toward proactive communication. Instead of hoping that a bot returns at the right moment, the site can send update signals when content is created, changed or removed.

For operators, this is not only an SEO feature. It is an infrastructure feature: fresher data, lower wasted crawl budget and more predictable server load.

Structured data still matters

AI agents need context. Structured data helps them understand what a page is: a tool profile, a guide, an article, an organization, a breadcrumb trail or a software entry.

JSON-LD remains the practical format because it keeps machine-readable information separate from visible text. For a directory like Utildesk, structured data can clarify tool names, categories, pricing signals, related articles and canonical URLs.

The goal is not to stuff pages with schema markup. The goal is to make the important entities explicit and consistent.

Set boundaries for extraction

AI visibility is valuable, but uncontrolled extraction is not. A mature strategy defines what should be crawlable, what should be noindexed, and which internal endpoints are meant for machines but not for search results.

That means robots rules, X-Robots-Tag headers, canonical URLs, noindex for internal APIs where appropriate, and clear separation between public content and admin or review areas.

Practical checklist

- Identify AI crawlers and separate them from human traffic.

- Keep sitemap, canonical URLs and structured data consistent.

- Use update signals such as IndexNow for important changes.

- Keep internal review tools and machine APIs out of the public index where needed.

- Decide which content should be used by AI systems and which should remain protected.

Bottom line

An AI-ready website is not just optimized for classic search. It is observable, machine-readable, controlled and explicit about boundaries. The winners will be sites that give useful agents enough structure to understand content while keeping ownership, server load and publication control intact.